Chris Agnew, Cristina Barnard, Elizabeth Kozleski, Victor Lee, Susanna Loeb, Lauren Ziegler

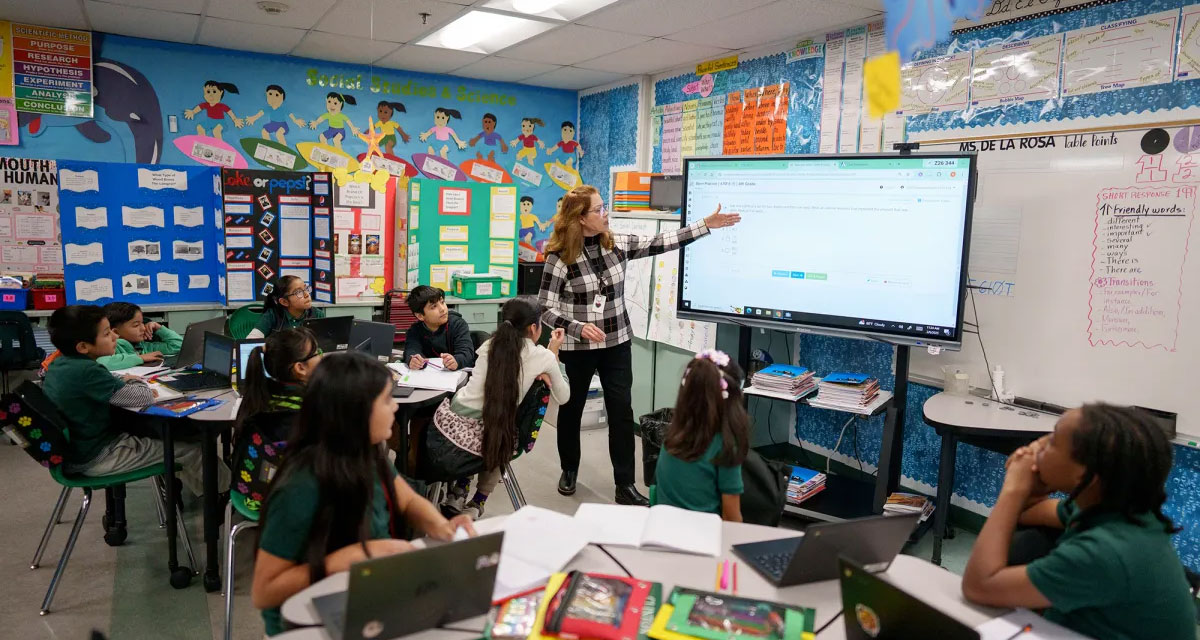

AI is already part of daily life in California schools. Teachers are using it to draft lesson plans, adapt materials, and manage administrative tasks. Students are using it to brainstorm, summarize, solve problems, and draft writing. School and district leaders are exploring how these tools might support instruction, communication, data use, and operations. This adoption has moved quickly, often faster than the development of clear policies, shared expectations, and sustained professional learning.

AI creates real possibilities as well as real concerns. These tools may help schools provide more targeted instruction, more timely feedback, and better access to learning for students with diverse needs. They also raise concerns about misinformation, bias, privacy, and patterns of use that may weaken revision, persistence, and sustained thinking. The research base is still limited, and current evidence does not yet show the long-term effects of AI on student learning, motivation, critical thinking, or well-being across settings and student groups.

The technology studies in Getting Down to Facts begin with student development. Schools are responsible for helping students build knowledge, reasoning, curiosity, agency, persistence, and the ability to work with others. The studies summarized here consider AI in relation to those capacities and to the conditions that support their development. Starting with student capacities keeps the focus on the most important educational experiences for California schools.

This brief draws on the Getting Down to Facts III technical reports to describe AI use in California schools. The central policy question is how schools and systems will shape AI use. AI may help make learning environments more responsive, flexible, and centered on students’ needs, but those possibilities depend on stronger guidance, professional learning, data protections, and implementation capacity than most schools currently have. California schools are already making decisions about instruction, privacy, academic integrity, communication, and procurement without strong shared infrastructure to guide them.

Key Findings

1. New technologies, particularly AI, present both substantial opportunities and substantial risks for California’s education system.

Recent advances in AI, including generative tools, adaptive systems, and data-driven platforms, could change teaching, learning, and school operations in significant ways. These tools may help schools provide more personalized instruction, faster feedback, and more flexible supports for students. They also raise serious concerns about misinformation, bias, privacy, overreliance, and possible effects on students’ motivation, critical thinking, and well-being.

2. If developed and used effectively, AI tools can help California address persistent barriers that limit the spread of effective educational practices.

Research has already identified the learning experiences that best support student development. In many schools, those practices remain hard to sustain because of organizational constraints, limited capacity, and uneven access to resources. AI could help reduce some of those barriers by supporting coordination, adaptation, and access to information that teachers and schools often lack the time or staff to provide consistently.

3. California schools are already using powerful AI tools, often without sufficient guidance, policies, or system-level support.

AI use is already widespread in California schools, especially for instructional planning and administrative work. Many schools and districts, however, still do not have formal policies for AI use. Guidance on safe, effective, and equitable implementation remains limited, leaving educators and local leaders to make consequential decisions with uneven support.

4. Educators and system leaders are seeking significantly more guidance and professional learning on both how to use AI effectively and how to mitigate its risks.

Across the state, many educators report that they do not feel well prepared to use AI in teaching and learning. They are asking for clearer policies, stronger professional development, and more support on issues such as academic integrity, privacy, bias, and the role AI should play in student learning. Students also have limited access to AI literacy instruction, leaving many to use these tools without much formal preparation.